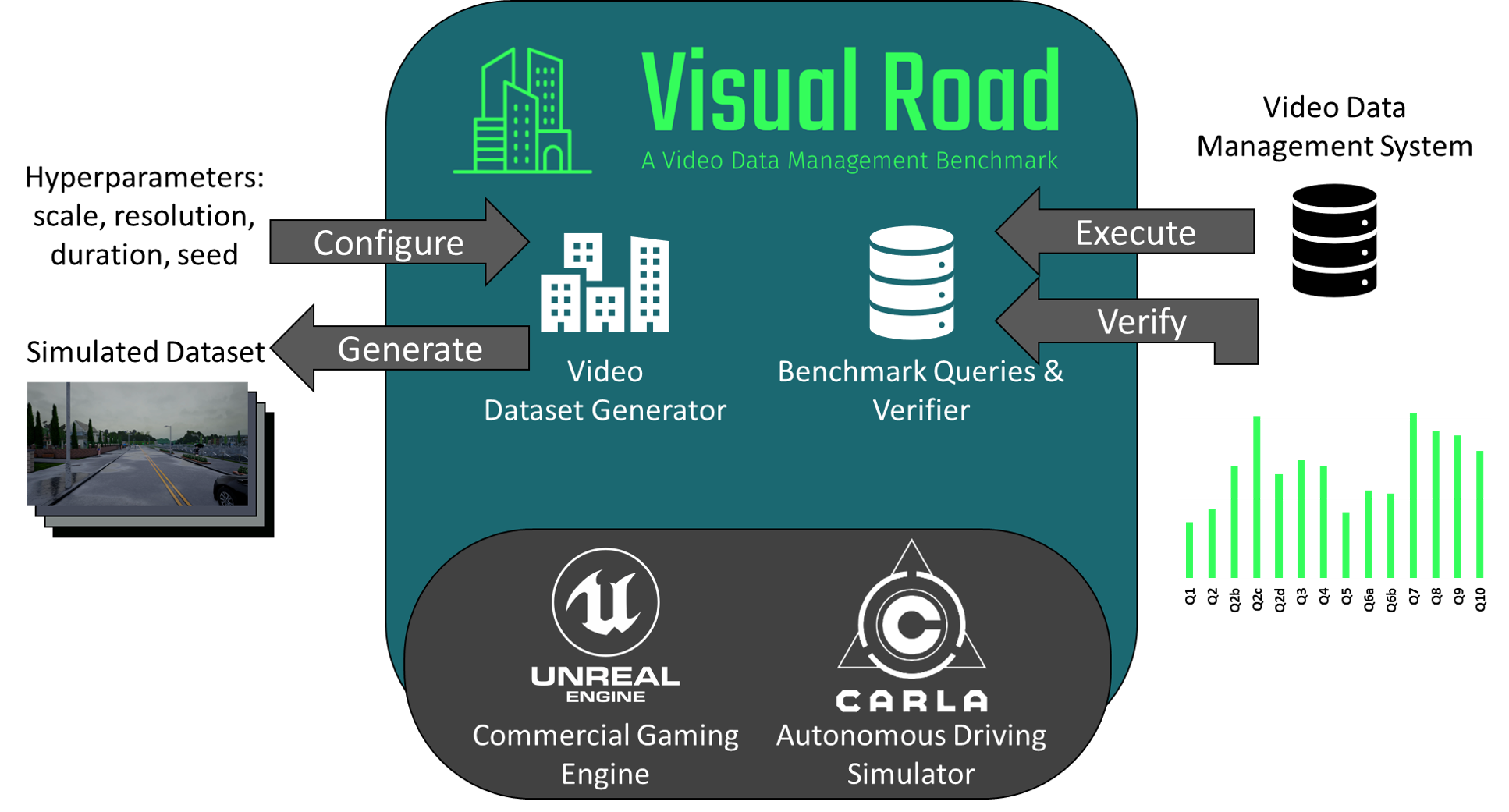

Video database management systems (VDBMSs) have recently re-emerged as an active area of research and development. To accelerate innovation in this area, we present Visual Road, a benchmark that evaluates the performance of these systems. Visual Road comes with a dataset generator and a suite of benchmark queries over cameras positioned within a simulated metropolitan environment. Visual Road's video data is automatically generated with a high degree of realism, and annotated using a modern simulation and visualization engine. This allows for VDBMS performance evaluation while scaling up the size of the input data.

Visual Road is designed to evaluate a broad variety of VDBMSs: real-time systems, systems for longitudinal analytical queries, systems processing traditional videos, and systems designed for 360° videos. Visual Road relies on the Unreal Engine for physical simulation and rendering, and the Carla simulator as a back-end engine (including its assets, geographic elements, and actor automation logic).

The following videos are representative of the traffic cameras found in a synthetic Visual Road dataset:

| Name | Scale | Resolution | Duration | Version | Configuration | Download |
|---|---|---|---|---|---|---|
| 1K-Short-1 | 1 | 1K (960x540) | 15 min | 1 | Link | 1k-short.tar.gz |
| 1K-Short-2 | 2 | 1K (960x540) | 15 min | 1 | Link | 1k-short-2.tar.gz |
| 1K-Long-4 | 4 | 1K (960x540) | 60 min | 1 | Link | 1k-long-4.tar.gz |
| 2K-Short-2 | 2 | 2K (1920x1080) | 15 min | 1 | Link | 2k-short-2.tar.gz |
| 4K-Short-1 | 1 | 4K (3840x2160) | 15 min | 1 | Link | 4k-short.tar.gz |
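
As a rough illustration of how the listed resolutions scale the input data, the sketch below transcribes the table rows into a small record type and compares pixels per frame across datasets. The `Dataset` class and the `find` and `relative_pixels` helpers are hypothetical names introduced only for this example and are not part of Visual Road; the values are copied from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    """One row of the dataset table (illustrative type, not a Visual Road API)."""
    name: str
    scale: int
    width: int
    height: int
    minutes: int
    archive: str

    @property
    def pixels_per_frame(self) -> int:
        # Raw pixel count of a single frame at this resolution.
        return self.width * self.height


# Rows transcribed from the table above.
DATASETS = [
    Dataset("1K-Short-1", 1, 960, 540, 15, "1k-short.tar.gz"),
    Dataset("1K-Short-2", 2, 960, 540, 15, "1k-short-2.tar.gz"),
    Dataset("1K-Long-4", 4, 960, 540, 60, "1k-long-4.tar.gz"),
    Dataset("2K-Short-2", 2, 1920, 1080, 15, "2k-short-2.tar.gz"),
    Dataset("4K-Short-1", 1, 3840, 2160, 15, "4k-short.tar.gz"),
]


def find(name: str) -> Dataset:
    """Look up a dataset by its name in the table."""
    for dataset in DATASETS:
        if dataset.name == name:
            return dataset
    raise KeyError(name)


def relative_pixels(name: str, baseline: str = "1K-Short-1") -> float:
    """Pixels per frame of `name` relative to the baseline dataset."""
    return find(name).pixels_per_frame / find(baseline).pixels_per_frame


if __name__ == "__main__":
    for dataset in DATASETS:
        ratio = relative_pixels(dataset.name)
        print(f"{dataset.name}: {dataset.pixels_per_frame} pixels/frame "
              f"({ratio:.0f}x the 1K baseline)")
```

Doubling both dimensions quadruples the pixels in each frame, so the 2K and 4K entries carry 4 and 16 times as many pixels per frame as the 1K entries, before any compression is applied.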

If you’ve generated a dataset and would like to share its configuration with the world, please post it here with the details! To view a list of dataset configurations, please click here.

Brandon Haynes, Amrita Mazumdar, Magdalena Balazinska, Luis Ceze, Alvin Cheung. Visual Road: A Video Data Management Benchmark. SIGMOD, 2019.

Brandon Haynes, Amrita Mazumdar, Armin Alaghi, Magdalena Balazinska, Luis Ceze, Alvin Cheung. LightDB: A DBMS for Virtual Reality Video. PVLDB, 11 (10): 1192-1205, 2018

Brandon Haynes, Artem Minyaylov, Magdalena Balazinska, Luis Ceze, Alvin Cheung. VisualCloud Demonstration: A DBMS for Virtual Reality. SIGMOD, 1615-1618, 2017. [Best Demonstration Honorable Mention]

This work is supported by the NSF through grants CCF-1703051, IIS-1546083, CCF-1518703, and CNS-1563788; DARPA award FA8750-16-2-0032; DOE award DE-SC0016260; a Google Faculty Research Award; an award from the University of Washington Reality Lab; gifts from the Intel Science and Technology Center for Big Data, Intel Corporation, Adobe, Amazon, Facebook, Huawei, and Google; and by CRISP, one of six centers in JUMP, a Semiconductor Research Corporation (SRC) program sponsored by DARPA.